As agentic AI continues its proliferation into everyday work and life, concerns about security and quality are regularly raised. Indeed, due to the fundamental nature of the multimodal models underlying commonly used products, incorrect, misleading and invalid outputs are to be expected.

A key concern is that unchecked production of false or low-quality information and inefficient or ineffective code will degrade processes over time. I think it’s a valid one.

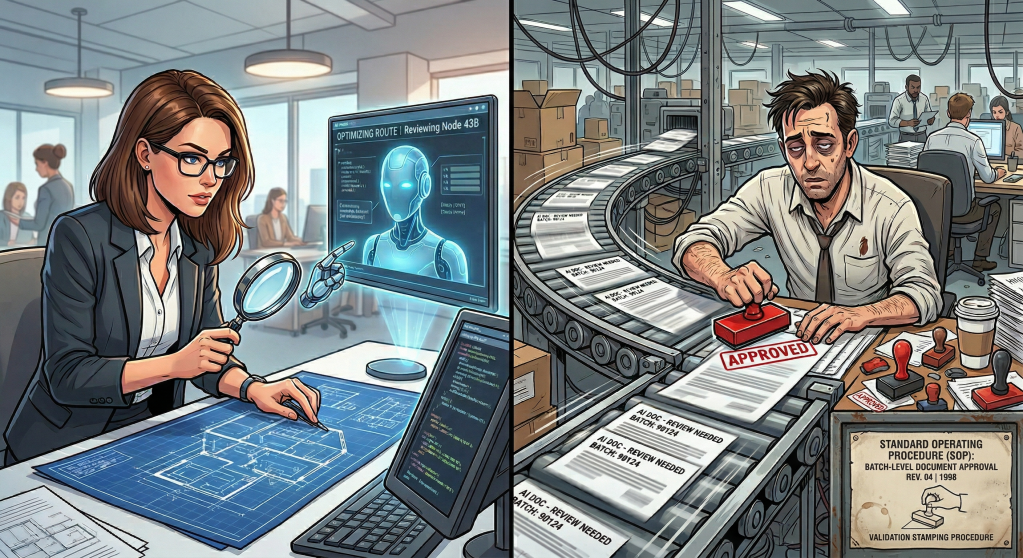

The common remedy for validating high-value outputs is human-in-the-loop, or HITL. The agent does the initial prep work and tugs at your sleeve when it’s time to decide whether to incorporate new information, code or other outputs into an existing body of work. The idea is to have humans use their expertise to check, polish and finalize AI outputs.

Human-in-the-loop is a beautiful concept – human and machine working together, both doing what they do best. It also has several critical flaws which I predict will become truly apparent going forward.

Grappling with externalized thought

Let’s get one thing straight: our brains are hard-wired to avoid costly cognitive effort whenever possible.

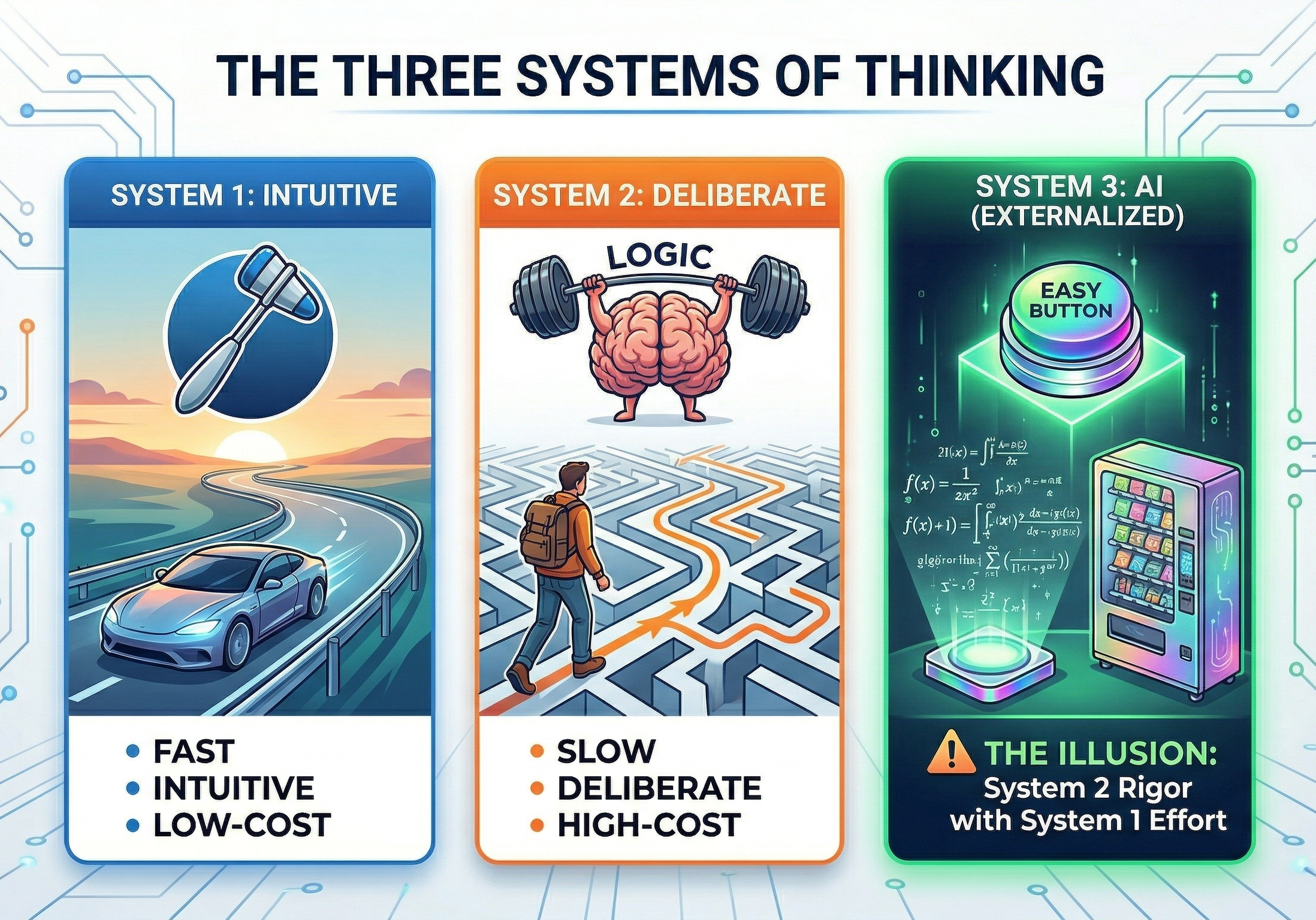

We prioritize intuitive answers, mental shortcuts and other aids to minimize the strain and stress of racking our grey matter for answers and analysis. This usually happens without a conscious decision. We can identify two distinct modes of thinking, which psychologist Daniel Kahneman calls System 1 and System 2.

System 1 – Intuition

System 1 is our intuitive, low-cost daily driver. We use it for easy, routine tasks like:

- Driving a car on an uncongested highway

- Answering simple questions like “What is 1+1?”

- Detecting obvious social cues like hostility or excitement in other people’s tones and postures

- Scribbling your signature on a form for the 100th time

System 2 – Deliberation and analysis

System 2 is the costly but powerful cognitive engine we use sparingly for things such as:

- Navigating in an unfamiliar environment

- Grappling with complex problems, such as planning a demanding new project at work

- Overriding instinctive urges to act in specific ways to account for the requirements of professional, cultural and other special contexts

- Filling out an official form you’ve never seen before

When we encounter problems that seem to require effort from System 2, we instinctively look for System 1 level shortcuts first to avoid the unwanted strain, such as…

- Authority bias aka trusting the source – Instead of assessing the information in detail, we make a snap judgment on the system or person delivering it. Does the person seem confident and authoritative? Are they (or is the source system) already trusted by others I know?

  - This is why medical ads have actors in white lab coats pushing products.

  - It is also why an officially approved agent explaining unfamiliar concepts to you at length is easy to take at face value.

- The Fluency Heuristic – We tend to bypass validating well-formatted outputs that are effortless to ingest, regardless of whether they’re incomplete, lacking important nuance or totally false.

- Social proof & the Bandwagon effect – When faced with difficult decisions involving multiple factors, we tend to favor choices we know others in similar situations have already made. Basically, we assume that if most folks already chose a certain way, then we’re safe in making the same bet. This lets us skip assessing the available options in more detail, which requires System 2 processing.

System 3 – Externalized reasoning

Agentic AI services and deep reasoning capabilities are so alluring and addictive exactly because they seem to offer a new, externalized System 3, combining the quality and rigor of System 2 outputs with the ease and intuitiveness of System 1.

I suggest AI services have rapidly become ubiquitous because they exploit this built-in tendency for effort minimization – they’re essentially a System 1 cognitive shortcut on steroids, impossible for our lazy brains to ignore.

Sure, we’ve offloaded cognitive effort to external systems before – just think of calculators, Wikipedia or Google Search for instance. What’s new with AI is the depth and breadth of answers on offer, presented in an authoritative, conversational style.

For human-in-the-loop to truly work and for us to be more than mentally impotent, ceremonial rubber stamps for agentic outputs, our organic System 2 has to constantly evaluate System 3’s products instead of simply trusting them outright.

Here’s why I think the deck is currently stacked against that happening.

Let’s get real about human nature

Expecting people to allocate a constant, unwavering degree of cognitive processing power to AI-generated content validation requires an idealistic, reductionist view of human behavior.

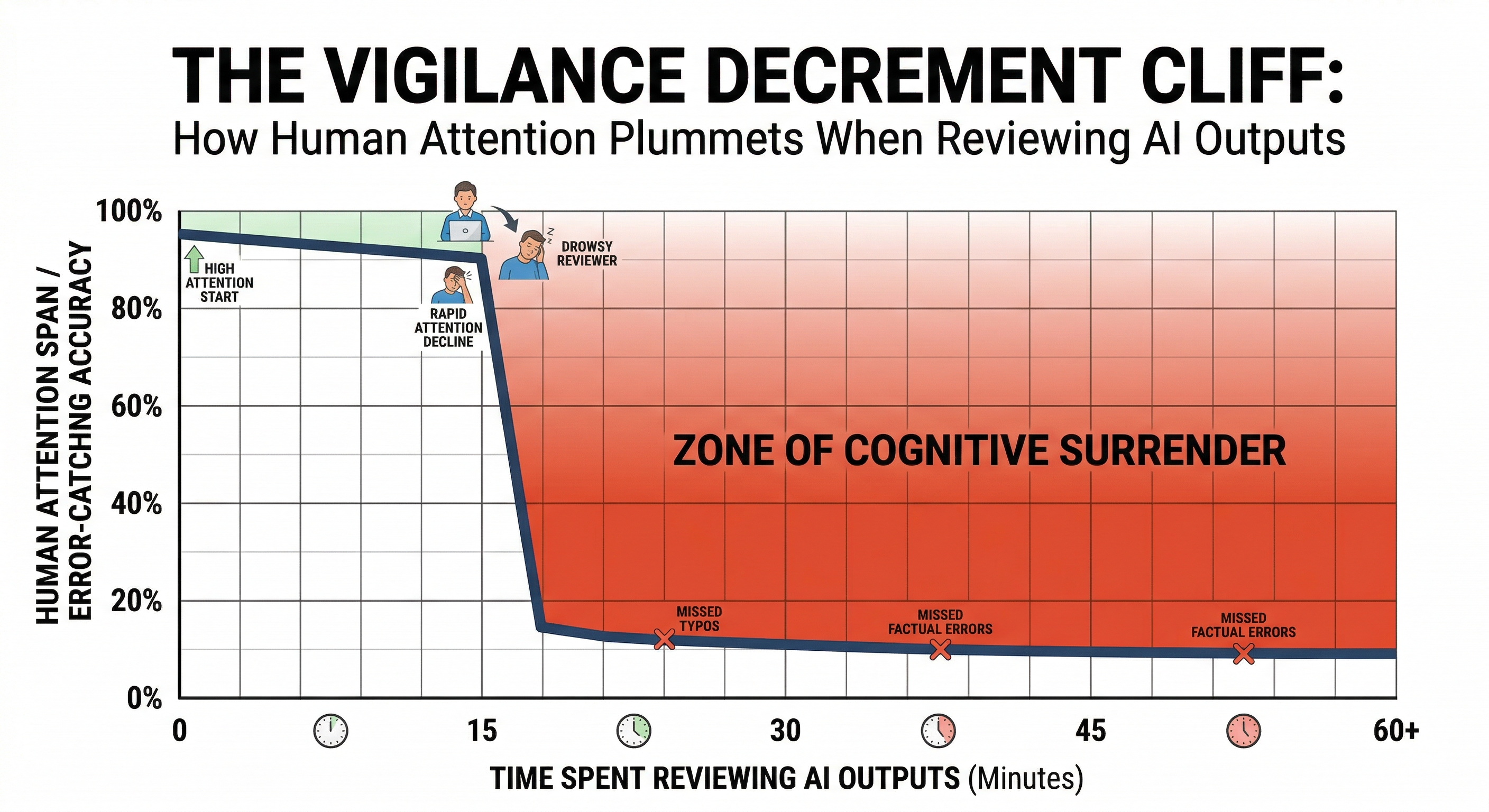

For example, the vigilance decrement (first studied by Norman Mackworth in 1948) is a well-known phenomenon in which our ability to monitor repeated batches of monotonous information for anomalies and errors rapidly decreases over time spent on task.

While this effect isn’t as strong when evaluating a single document or other AI-generated output, it starts to become relevant if ways of working shift so that AI becomes the primary content generator, with humans curating and compiling machine outputs.

When there’s an ongoing high rate of cognitive processing required with low chance of finding anomalies, we essentially start to tune out and our usefulness as an effective control gate quickly erodes – further degraded by conditions such as lack of sleep, irregular mood, hunger and more.

Studies going back to the first half of the 1970s already showed that our vigilance starts to degrade within the first 15 minutes of sustained focus, regardless of the individual’s level of expertise. Imagine what this means for full AI-assisted workdays spent mostly validating a constant stream of information.

We’re also affected by cognitive biases such as automation bias (which I discussed years ago) that make us treat machine-generated information – especially when it’s complex and taxing to validate – more favorably and with less suspicion than information produced by humans.

Recent research from the University of Pennsylvania coined the expression cognitive surrender for this predicted outcome – mass adoption of AI outputs with minimal evaluation, overriding both System 1 (intuition) and System 2 (deliberation and analysis).

The reviewed studies found that:

(…) participants with higher trust in AI and lower need for cognition and fluid intelligence showed greater surrender to System 3.

Are we screwed?

For clarity, I’m far from an AI doomer. In fact, I routinely use several different commercial AI products every week (Microsoft’s M365 Copilot & Copilot Studio, Anthropic’s Claude and Google’s Gemini) to help out with a range of professional and personal tasks, as well as an assortment of locally run models through Ollama. When used thoughtfully, these tools can be awesome force multipliers.

They’re here to stay.

Even so, we can’t bow to obvious commercial incentives by simply ignoring how human cognition really works. Mostly I’m worried about building mission-critical workflows that hinge on human-in-the-loop as the defining control.

While I certainly can’t point to a silver bullet that fixes everything, some ideas being kicked around might have merit in at least partially managing the problem.

One of these is adding confidence scoring for AI outputs, along with some sort of UX friction before we humans are allowed to use lower-confidence outputs. The counter-problem is that we adapt to inconvenience remarkably well – for instance, think about how quickly you dismiss the “do you want to accept cookies?” banners on websites.
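To make the confidence-plus-friction idea a bit more concrete, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `AgentOutput` type, the 0.8 threshold and the three friction levels are hypothetical, and it presumes the AI service supplies a usable confidence score between 0 and 1, which is far from a given in practice.

```python
from dataclasses import dataclass

# Hypothetical cut-off; a real deployment would have to calibrate this.
REVIEW_THRESHOLD = 0.8


@dataclass
class AgentOutput:
    text: str
    confidence: float  # assumed: a score in [0, 1] supplied by the AI service


def required_friction(output: AgentOutput, threshold: float = REVIEW_THRESHOLD) -> str:
    """Decide how much friction the user faces before using an output."""
    if not 0.0 <= output.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if output.confidence >= threshold:
        return "accept"   # no extra step
    if output.confidence >= threshold / 2:
        return "confirm"  # user must explicitly acknowledge the low score
    return "justify"      # user must write down why the output is still usable
```

The “justify” tier is there because a plain confirmation click is exactly the kind of friction we learn to dismiss without thinking.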

Another – and probably the way we’ll eventually have to go – is developing and adopting specialized adversarial agents and multi-agent systems at scale to evaluate and poke at all outputs. This essentially means AI auditing AI. Humans would then be brought in only to settle high-value “disputes” between the two systems, which would help work around our limited ability to stay vigilant.
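As a rough sketch of what that routing could look like, assume the auditing agent is just a function that returns a list of objections. The names `route_output` and `toy_auditor` are hypothetical, and the toy auditor is a trivial stand-in for what would really be a second model.

```python
from typing import Callable, List

# An auditor takes an output and returns its objections (empty list = no dispute).
Auditor = Callable[[str], List[str]]


def route_output(output: str, auditor: Auditor, high_value: bool) -> str:
    """Return 'accept', 'revise' or 'escalate_to_human' for one AI output."""
    objections = auditor(output)
    if not objections:
        return "accept"             # producer and auditor agree
    if high_value:
        return "escalate_to_human"  # only high-value disputes reach a person
    return "revise"                 # low-value disputes go back to the producer


def toy_auditor(text: str) -> List[str]:
    """Stand-in auditor: objects to any output that cites no source."""
    return [] if "source:" in text.lower() else ["no source cited"]
```

The point of the design is that a human only sees the small share of outputs that are both disputed and high-value, instead of monitoring the whole stream.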

I’ve also heard initial tales of companies experimenting with stress testing their HITL capability by deliberately adding “honey data” to select business documents involved in processes and seeing whether end users uncritically include the faulty information in AI-assisted work products that are supposed to be validated by humans.
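I don’t know how those companies actually implement this, but measuring such a test could be as simple as the sketch below. It assumes the planted “honey” items are known strings and that we can collect the human-approved work products as text. The function names are hypothetical.

```python
from typing import List


def surviving_honey_items(approved_text: str, honey_items: List[str]) -> List[str]:
    """Return the planted items that made it through human review."""
    lowered = approved_text.casefold()
    return [item for item in honey_items if item.casefold() in lowered]


def pass_rate(approved_texts: List[str], honey_items: List[str]) -> float:
    """Share of approved documents in which no planted item survived."""
    if not approved_texts:
        raise ValueError("need at least one approved document")
    clean = sum(
        1 for text in approved_texts
        if not surviving_honey_items(text, honey_items)
    )
    return clean / len(approved_texts)
```

Note that plain substring matching only catches planted items copied more or less verbatim. A paraphrased version of the same false claim would slip through, so this gives an optimistic reading of how well the human gate works.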

The challenges are real, and we’ll have to find robust answers relatively soon. Let’s get the discussion started so we aren’t caught with our metaphorical pants down.